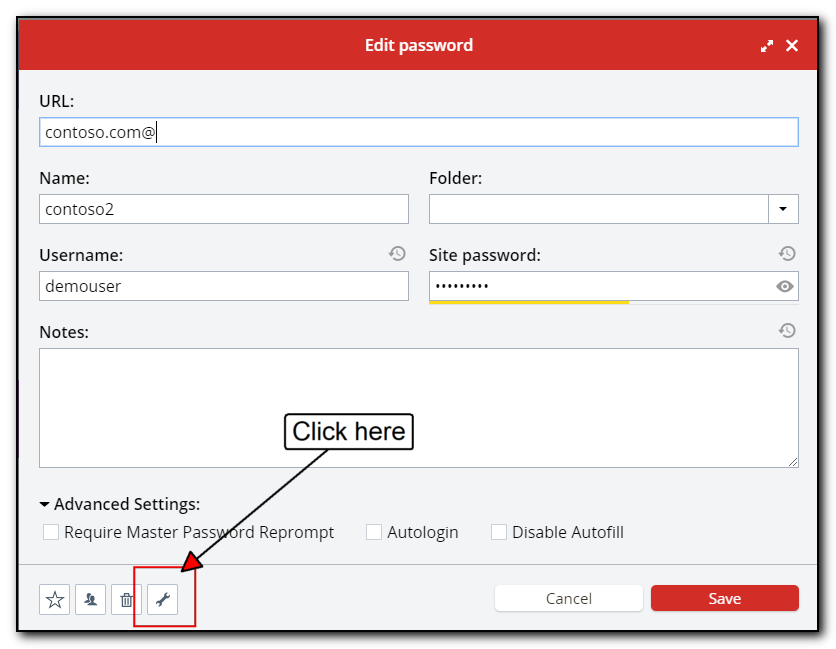

As more websites adopt passkeys, it’s becoming important to be able to synchronize them between devices. I have at least two computers and two mobile devices, and I’d like to sign in to a passkey-enabled website the same way on all of them rather than using a passkey on one device and a username and password on another.

Keeper has had passkey support for quite a while now, but until recently, synchronizing to an Android 14 device did not work. In my case, I would get a Google popup that stated, “No passkeys on this device.”

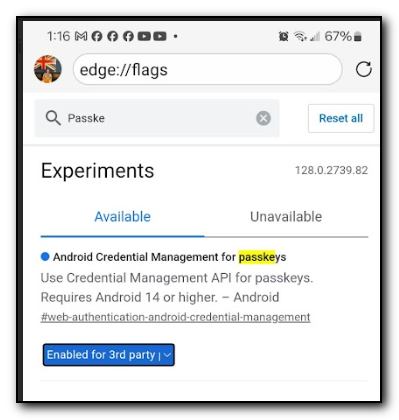

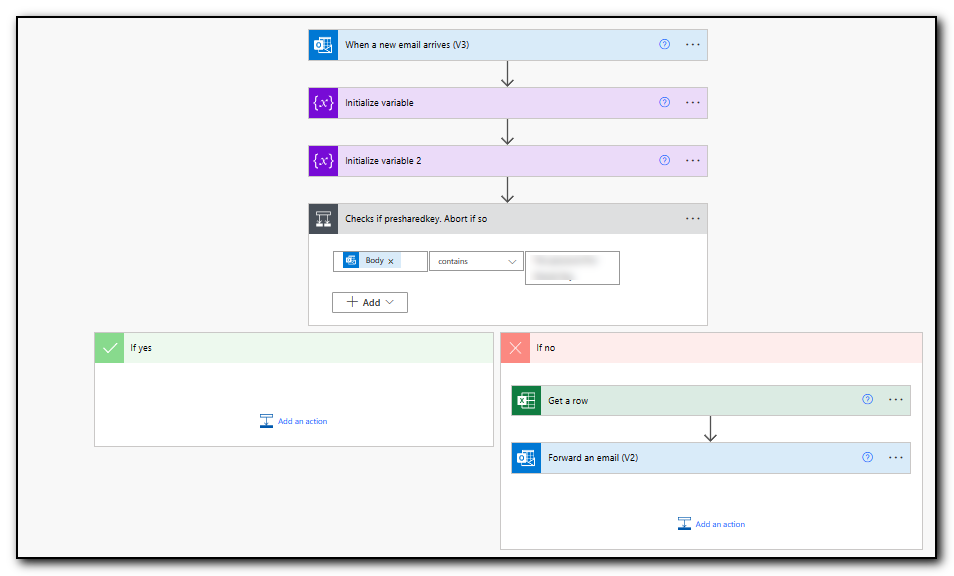

The Keeper instructions show that the M124 flag needs to be updated. Using those instructions as a base, I found that going to edge://flags and searching for Android Credential Management for passkeys brings up a drop-down box that offers Enabled for 3rd party passkeys. After selecting this, I was able to use the passkeys previously saved in Keeper.

A good site to test this is passkeys.io. It is just a demo site, so there is no secure data that you might end up losing if the passkey doesn’t work. After all, you probably don’t want to test this with your email provider! As a bonus, you can sign up with a random mailinator.com email address, so you don’t have to provide your real one.
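If you want a quick way to come up with a throwaway address for that test, something like the sketch below would do. The function name `randomMailinatorAddress` and the 12-character default are my own assumptions for illustration. They are not part of Keeper, passkeys.io, or Mailinator.

```typescript
// Hypothetical helper: builds a throwaway address for demo sign-ups.
// Mailinator inboxes are public, so never use one for a real account.
function randomMailinatorAddress(length: number = 12): string {
  const alphabet = "abcdefghijklmnopqrstuvwxyz0123456789";
  let local = "";
  for (let i = 0; i < length; i++) {
    // Pick one random character from the alphabet for each position.
    local += alphabet.charAt(Math.floor(Math.random() * alphabet.length));
  }
  return `${local}@mailinator.com`;
}

// Print one address to paste into the demo site's sign-up form.
console.log(randomMailinatorAddress());
```

A longer random name makes it less likely that someone else stumbles onto the same public inbox, which is good enough for a demo account that holds nothing of value.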

One of my banks now supports passkeys. My main bank is unfortunately way behind the curve and doesn’t even support TOTP codes unless you have a business account. Their MFA is typically an SMS text, although sometimes they send a push notification to the app on my phone. It’s odd that they don’t seem to understand that TOTP or push notifications are far more secure than SMS, and that the more secure method should be used consistently rather than about 25% of the time.